There is something uniquely electrifying about walking into a room full of brilliant developers, grabbing an unhealthy amount of caffeine, and realizing you have only hours to build the future. That was the energy this weekend at the Google DeepMind Hackathon Chicago 2026.

I came into this event with a clear goal: reimagine how we interact with our computers. We spend hours every day switching between apps, losing context, and forgetting what we were working on 20 minutes ago. What if our OS actually understood what we were doing? What if we had a sidekick that could not only see our screen, but act on it?

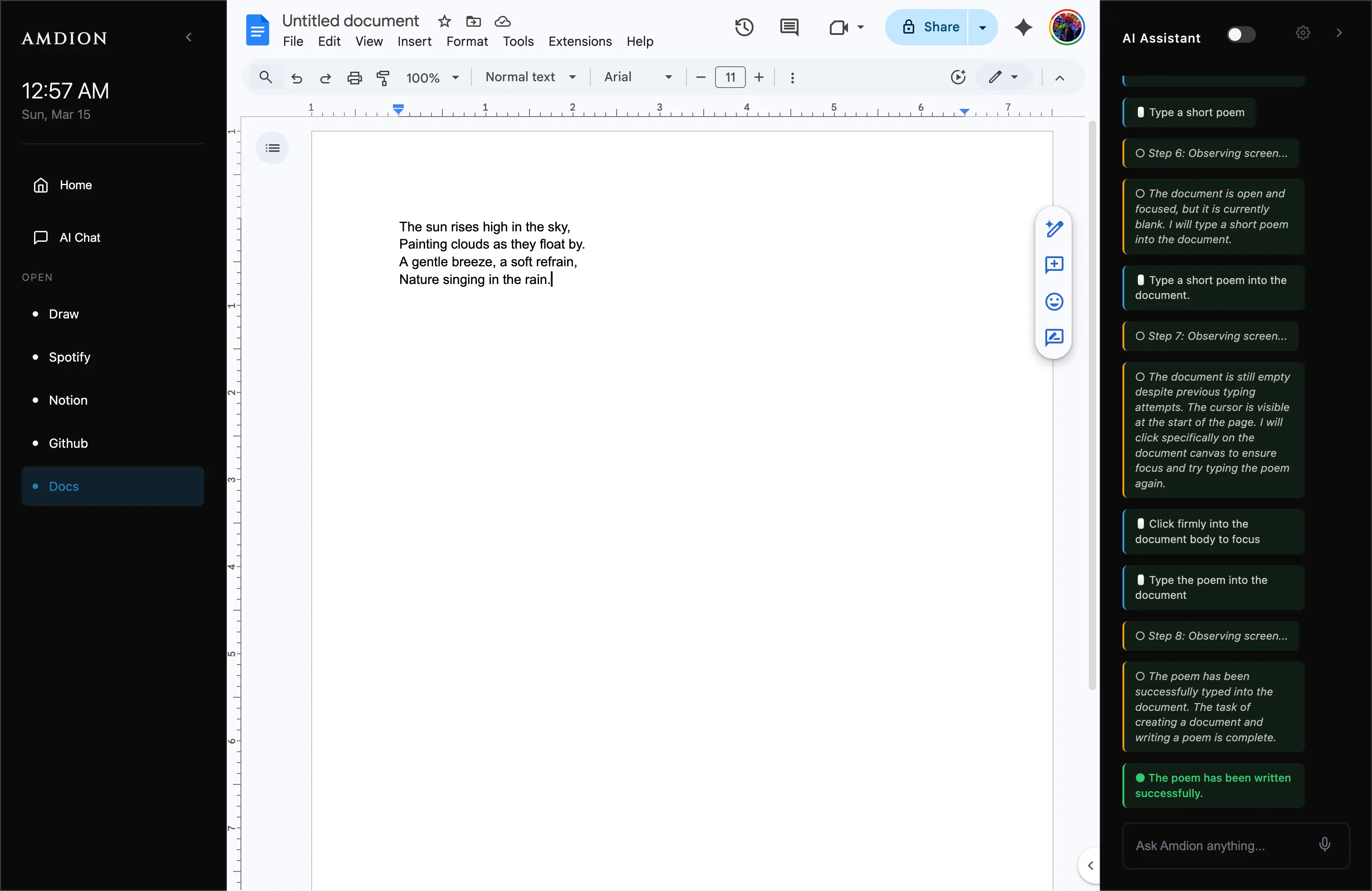

That question led to the creation of Amdion (Min-Comp).

The vision: a next-gen desktop assistant

Amdion is a local, AI-powered desktop assistant designed to live alongside your workflow. We wanted to build something that felt native, fast, and unintrusive.

Using Google’s Gemini 2.5 Flash, we built an architecture that could:

- See and understand: Amdion continuously (and privately) captures screenshots to build a context-aware journal of your day.

- Act and execute: We paired voice commands with Gemini’s agentic computer-use capabilities. You can literally say, “Hey, open Spotify and play some Bad Bunny,” and Amdion manipulates the UI to make it happen.

- Reflect and connect: At the end of a long coding session, Amdion generates a force-directed knowledge graph connecting the apps used, the topics researched, and how time was actually spent.
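To make the "reflect and connect" step concrete, here is a minimal sketch of how journal entries might be folded into the node-and-link structure that a force-directed layout consumes. The `JournalEntry` shape, the `app:`/`topic:` id prefixes, and the use of minutes as edge weight are all assumptions for illustration, not a description of Amdion's actual data model.

```typescript
// Hypothetical shape of one journal entry derived from a screenshot summary.
interface JournalEntry {
  app: string;      // application in focus, e.g. "VS Code"
  topics: string[]; // topics detected during this entry
  minutes: number;  // time attributed to this entry
}

interface GraphNode { id: string; kind: "app" | "topic"; minutes: number; }
interface GraphLink { source: string; target: string; weight: number; }
interface KnowledgeGraph { nodes: GraphNode[]; links: GraphLink[]; }

// Aggregate entries into unique nodes and weighted app-to-topic links.
function buildKnowledgeGraph(entries: JournalEntry[]): KnowledgeGraph {
  const nodes = new Map<string, GraphNode>();
  const links = new Map<string, GraphLink>();

  // Create the node on first sight, then accumulate time on it.
  const touchNode = (id: string, kind: "app" | "topic", minutes: number): void => {
    const node = nodes.get(id) ?? { id, kind, minutes: 0 };
    node.minutes += minutes;
    nodes.set(id, node);
  };

  for (const entry of entries) {
    const appId = `app:${entry.app}`;
    touchNode(appId, "app", entry.minutes);
    for (const topic of entry.topics) {
      const topicId = `topic:${topic}`;
      touchNode(topicId, "topic", entry.minutes);
      // One link per (app, topic) pair; repeated pairs add to the weight.
      const key = `${appId}->${topicId}`;
      const link = links.get(key) ?? { source: appId, target: topicId, weight: 0 };
      link.weight += entry.minutes;
      links.set(key, link);
    }
  }
  return { nodes: Array.from(nodes.values()), links: Array.from(links.values()) };
}
```

The `{ nodes, links }` output with `source`/`target` ids is a common input shape for force-directed graph libraries, so a renderer could size nodes by `minutes` and pull heavily linked apps and topics closer together.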

The stack and the struggle

Hackathons are never without hurdles. We chose Electron because we needed deep OS-level integration: specifically, desktopCapturer to see the screen and BrowserView to embed full web apps like Google Docs and Spotify inside our minimalist wrapper.

Integrating the Gemini API (@google/genai) was a dream. Its multimodal capabilities let us send screen images and get back structured JSON summaries of user focus.
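A rough sketch of that round trip, under stated assumptions: the request object below follows the shape accepted by `ai.models.generateContent` in `@google/genai` (a model name, `contents` with an `inlineData` image part plus a text part, and `responseMimeType: "application/json"`), while the `FocusSummary` fields and the prompt wording are hypothetical. The SDK call itself is left out so the block runs without an API key.

```typescript
// Hypothetical summary shape; the real JSON fields are not given in this post.
interface FocusSummary {
  app: string;
  activity: string;
  topics: string[];
}

// Build a request object that could be passed to ai.models.generateContent(...).
function buildFocusRequest(screenshotBase64: string) {
  return {
    model: "gemini-2.5-flash",
    contents: [
      {
        role: "user",
        parts: [
          // The screenshot travels inline as base64-encoded PNG data.
          { inlineData: { mimeType: "image/png", data: screenshotBase64 } },
          {
            text:
              "Describe what the user is focused on in this screenshot. " +
              'Reply as JSON: {"app": string, "activity": string, "topics": string[]}',
          },
        ],
      },
    ],
    // Ask the model to return JSON rather than free-form prose.
    config: { responseMimeType: "application/json" },
  };
}

// Validate the model's text defensively; return null on anything unexpected.
function parseFocusSummary(responseText: string): FocusSummary | null {
  try {
    const raw: unknown = JSON.parse(responseText);
    if (typeof raw !== "object" || raw === null) return null;
    const obj = raw as Record<string, unknown>;
    const app = obj["app"];
    const activity = obj["activity"];
    const topicsRaw = obj["topics"];
    if (typeof app !== "string" || typeof activity !== "string") return null;
    // Keep only string topics; treat a missing list as empty.
    const topics = Array.isArray(topicsRaw)
      ? topicsRaw.filter((t): t is string => typeof t === "string")
      : [];
    return { app, activity, topics };
  } catch {
    return null; // not valid JSON
  }
}
```

Validating the parsed JSON before it reaches the journal matters here because model output is not guaranteed to match the requested shape, even with a JSON response type set.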

The biggest challenge was voice input inside Electron.

We initially tried the Web Speech API, only to discover that speech recognition is effectively blocked in Electron: it depends on Google API keys that are baked into Chrome's binary but absent from Electron's Chromium build. Down to the wire, we had to pivot entirely. We ended up writing a custom implementation that uses MediaRecorder to capture audio blobs, base64-encodes them, sends them through an IPC bridge, and has Gemini transcribe the audio directly into text commands. The change was painful, but it made the app significantly more robust.
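The data-handling half of that pipeline can be sketched as below. This is an illustration under assumptions, not the shipped code: the `VoiceCommandMessage` shape and the prompt are hypothetical, the base64 encoder is hand-rolled only to keep the example dependency-free, and the Electron and SDK calls are described in comments rather than invoked.

```typescript
const B64 = "ABCDEFGHIJKLMNOPQRSTUVWXYZabcdefghijklmnopqrstuvwxyz0123456789+/";

// Encode raw bytes as standard base64 with "=" padding.
function toBase64(bytes: Uint8Array): string {
  let out = "";
  for (let i = 0; i < bytes.length; i += 3) {
    const has1 = i + 1 < bytes.length;
    const has2 = i + 2 < bytes.length;
    const b0 = bytes[i];
    const b1 = has1 ? bytes[i + 1] : 0;
    const b2 = has2 ? bytes[i + 2] : 0;
    out += B64.charAt(b0 >> 2);
    out += B64.charAt(((b0 & 3) << 4) | (b1 >> 4));
    out += has1 ? B64.charAt(((b1 & 15) << 2) | (b2 >> 6)) : "=";
    out += has2 ? B64.charAt(b2 & 63) : "=";
  }
  return out;
}

// Hypothetical IPC payload; field names are assumptions.
interface VoiceCommandMessage {
  mimeType: string;    // whatever MediaRecorder reports, e.g. recorder.mimeType
  audioBase64: string; // recorded audio, base64-encoded for transport
}

// Renderer side: in a real app the bytes would come from something like
// new Uint8Array(await blob.arrayBuffer()) once the MediaRecorder stops,
// and the message would be sent with ipcRenderer.invoke on a channel of your choosing.
function buildVoiceMessage(audio: Uint8Array, mimeType: string): VoiceCommandMessage {
  return { mimeType, audioBase64: toBase64(audio) };
}

// Main-process side: turn the IPC payload into a request object for
// ai.models.generateContent(...). Whether Gemini accepts the recorder's
// container format should be verified against the current API docs.
function buildTranscriptionRequest(msg: VoiceCommandMessage) {
  return {
    model: "gemini-2.5-flash",
    contents: [
      {
        role: "user",
        parts: [
          { inlineData: { mimeType: msg.mimeType, data: msg.audioBase64 } },
          { text: "Transcribe this audio. Return only the spoken words." },
        ],
      },
    ],
  };
}
```

Base64 is used because IPC messages and inline request parts are easiest to handle as plain strings. The encoding adds roughly a third to the payload size, which is acceptable for short voice commands.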

The takeaway

There is a specific kind of magic that happens at 2:00 AM when a feature finally clicks into place. Watching Amdion successfully listen to my voice, transcribe it, analyze my screen, and autonomously navigate to play a song was one of the most rewarding engineering moments I have had.

The Google DeepMind Hackathon Chicago 2026 was not just about the prize or the code. It was about proving that the era of “dumb” operating systems is ending. Context-aware, agentic UI is the future of human-computer interaction, and I am incredibly proud of the prototype we built to prove it.

Huge shoutout to my colleague Venkat Roy for helping push this forward. We are hosting the installer on his site: amdion.org.

Watch the demo on YouTube: https://www.youtube.com/watch?v=CsZV4kS4XNs